Serverless Testing, Part 3: Simplify Testing with SOLID Architecture

AWS Serverless Community Builder, Serverless Architect and Evangelist, creator of serverlessdna.com

Serverless Testing, Part 1: What I forgot at the beginning looks at what I overlooked when I started building serverless solutions. It encourages bringing software architecture principles back into your Serverless code and focuses on the Dependency Inversion Principle, something all developers should understand.

Serverless Testing, Part 2: SOLID Architecture for Lambda covers SOLID software architecture principles and how they apply to Serverless. It also shows how to implement Hexagonal Architecture, using software interfaces and adapters as an abstraction that isolates your business domain code from the details of interacting with the cloud.

In this final piece of the puzzle, I will cover how implementing your business domain logic with Hexagonal SOLID software architecture principles simplifies testing your code and removes the need to mock AWS SDK calls entirely. This article covers unit, integration and end-to-end tests for the example architecture presented.

Testing Serverless systems is not a "now we have to simulate the cloud" problem.

Testing code destined for the cloud is a confusing space, with many opinions and tools being built to support all manner of local, remote, semi-remote and local-remote testing. This article is born from my continued frustration with developers wanting to replicate the cloud locally so they can run their entire solution end to end on their desktop. This reflects a desire to keep developing the way they always have, rather than adapting their development practices to the changing deployment landscape that Serverless brings.

A Quick Word on "Mocking"

One of my frustrations is watching Serverless developers waste time mocking AWS SDK calls. Mocking AWS SDK calls is never straightforward and usually requires several additional libraries to be effective. On top of this, junior or less cloud-experienced developers on your team may struggle to mock AWS calls, mainly because they don't yet understand the nuances of the API responses.

Please do not take this to mean that I think we should eliminate mocking. Mocking is vital for good unit testing, and I strongly encourage it. Just not for AWS SDK calls: better software architecture removes the need to mock them. In the remainder of this article, I will explain how better software architecture simplifies your Serverless testing activities and enables your team to move faster.

The GitHub Example Project

This article is supported by an example Python Lambda project that applies the testing techniques discussed here. You can find it on my GitHub here. The article does not go into every aspect of the project; the project serves only as a reference for the testing strategies introduced in this article.

A Few Notes on the Project

The sample project focuses on general Serverless testing for synchronous API services with some asynchronous components. It does not cover every possible serverless testing scenario; it is intended as a general example to help you get started with Serverless testing quickly, and it applies to the majority of Serverless use cases.

The README.MD file describes the project's directory structure and explains how the project is built.

AWS CDK, written in Python, is used to deploy the code to your cloud account.

The Python folders are structured in a specific way so that each Lambda code directory works with the PythonFunction CDK construct, which uses Docker to package the code so that dependencies containing compiled binaries function correctly when deployed.

Building and deploying the project needs minimal scripting and always works within the existing folder structure. This avoids the build magic some developers resort to, where scripts move source files around to package Lambda functions.

The project relies on Poetry for packaging and follows a convention of installing dependencies in a separate group for each Lambda function in the project. This ensures dependencies are packaged only where they are required, which keeps start-up times fast, an important consideration for AWS Lambda.

The project uses AWS Lambda Powertools for Python, for no other reason than that every project should. If you don't know what it is, click the link or check out my talk from the Melbourne Serverless Meetup: What I love about AWS Lambda PowerTools for Python.

Pytest is used for unit testing. For the integration and end-to-end test modules, pytest set-up and tear-down mechanisms create ephemeral stacks for testing. I borrowed this technique from the AWS Lambda Powertools for Python project, which I think you should check out if you haven't already!

The code within the GitHub project is not a production-ready sample, so use it with caution and modify it to meet your production system requirements.

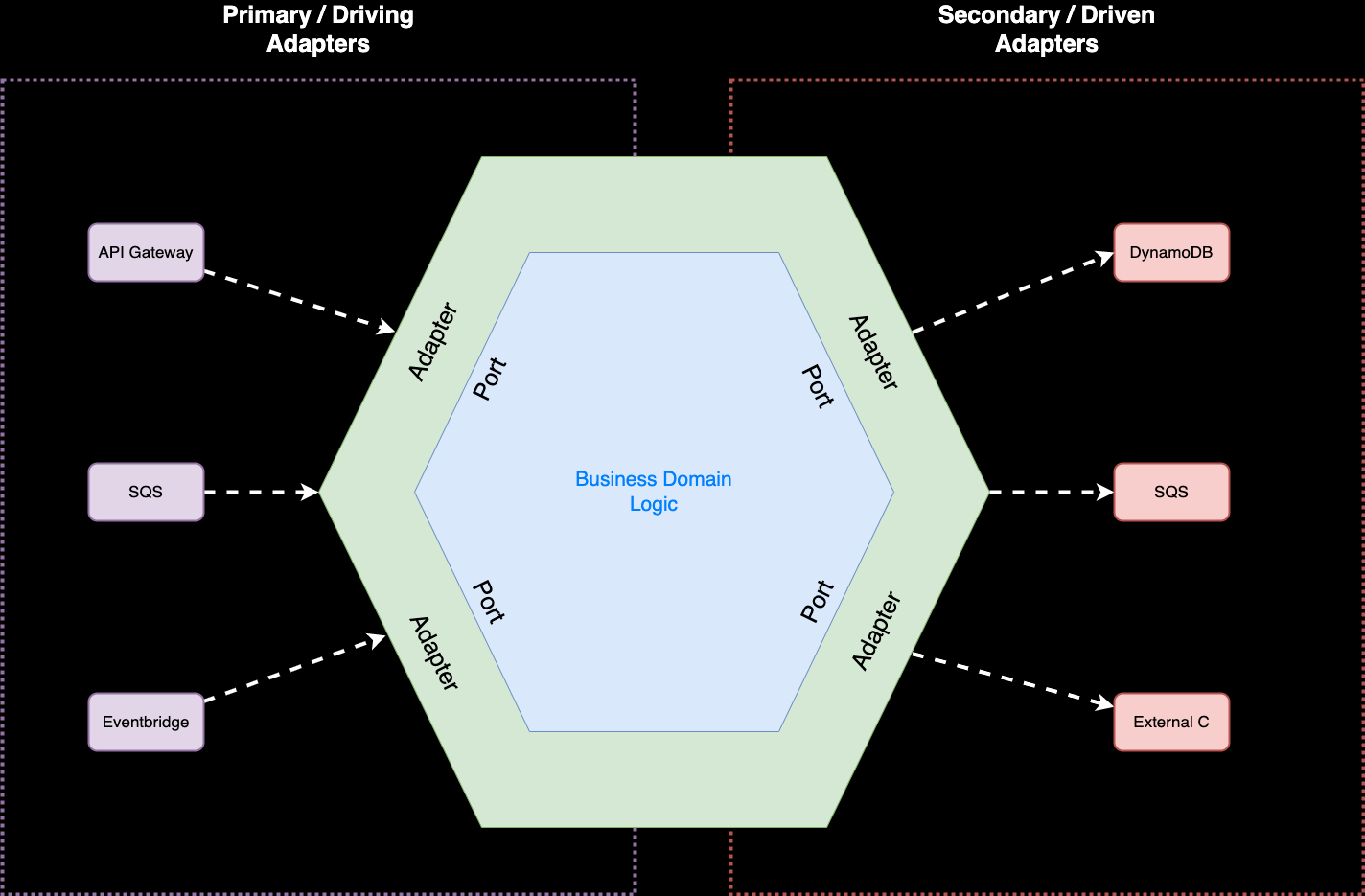

Better Architecture with Hexagons

I wrote about the Dependency Inversion Principle, SOLID Principles and Hexagonal architecture in the previous parts of this series. Hexagonal architecture is an architectural pattern that splits your system into distinct components (not layers) and introduces a combination of Ports and Adapters, which separate your business domain logic from external resources such as databases and cloud services like API Gateway, EventBridge, SQS and DynamoDB. The hexagon shape itself is unimportant; it simply marks the boundary where the adapters sit within the software. In Alistair Cockburn's original article, the primary motivation for the pattern was to stop functionality bleeding between software layers in more traditional, monolithic solutions, which undermined the advantages of cleanly layered software with isolation boundaries. By introducing smaller, more specific interfaces that the business logic uses to achieve a business outcome, Hexagonal architecture makes the abstraction over external services clearer and less ambiguous.

I like this layout because it makes it quick to see what the inputs are, where the processing happens and what the outputs are, which maps very clearly to how AWS Lambda code works in the cloud.

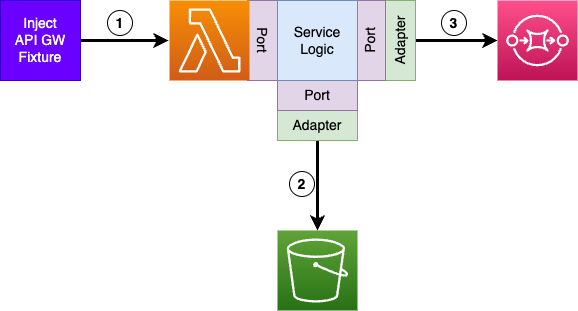

On the left are the driving adapters for the AWS event sources, which drive event data into our business domain logic through the defined ports (interfaces). On the right are the external cloud resources driven by our business domain logic, where data is stored or pushed for further processing. Our business domain logic interacts with these driven services through interfaces, and the adapter implementations behind those interfaces make the AWS SDK calls to the cloud resources.
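To make the driven side concrete, here is a minimal Python sketch of the two driven ports used later in this article, FileStoragePort and MessagePort, with adapters that make the AWS SDK calls. The port names come from the sample project, but the method names (save_file, send_message) and the adapter classes are hypothetical illustrations, not the project's code. The adapters receive an already-created client (for example boto3.client("s3")) instead of importing boto3 themselves, which keeps the sketch self-contained.

```python
from abc import ABC, abstractmethod
from typing import Any


class FileStoragePort(ABC):
    """Driven port: persist a payload somewhere durable."""

    @abstractmethod
    def save_file(self, key: str, body: str) -> None:
        """Store body under key."""


class MessagePort(ABC):
    """Driven port: forward a message to the next component in the chain."""

    @abstractmethod
    def send_message(self, body: str) -> None:
        """Send body for further processing."""


class S3FileStorageAdapter(FileStoragePort):
    """Hypothetical adapter fulfilling FileStoragePort with an S3 client, e.g. boto3.client("s3")."""

    def __init__(self, s3_client: Any, bucket_name: str) -> None:
        self._s3 = s3_client
        self._bucket = bucket_name

    def save_file(self, key: str, body: str) -> None:
        # The S3 API details live here and nowhere else.
        self._s3.put_object(Bucket=self._bucket, Key=key, Body=body.encode("utf-8"))


class SqsMessageAdapter(MessagePort):
    """Hypothetical adapter fulfilling MessagePort with an SQS client, e.g. boto3.client("sqs")."""

    def __init__(self, sqs_client: Any, queue_url: str) -> None:
        self._sqs = sqs_client
        self._queue_url = queue_url

    def send_message(self, body: str) -> None:
        # The SQS API details live here and nowhere else.
        self._sqs.send_message(QueueUrl=self._queue_url, MessageBody=body)
```

Because the business logic only ever sees the abstract ports, swapping an adapter for an in-memory fake in a unit test requires no change to the business code.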

Testing: Unit, Integration and End to End

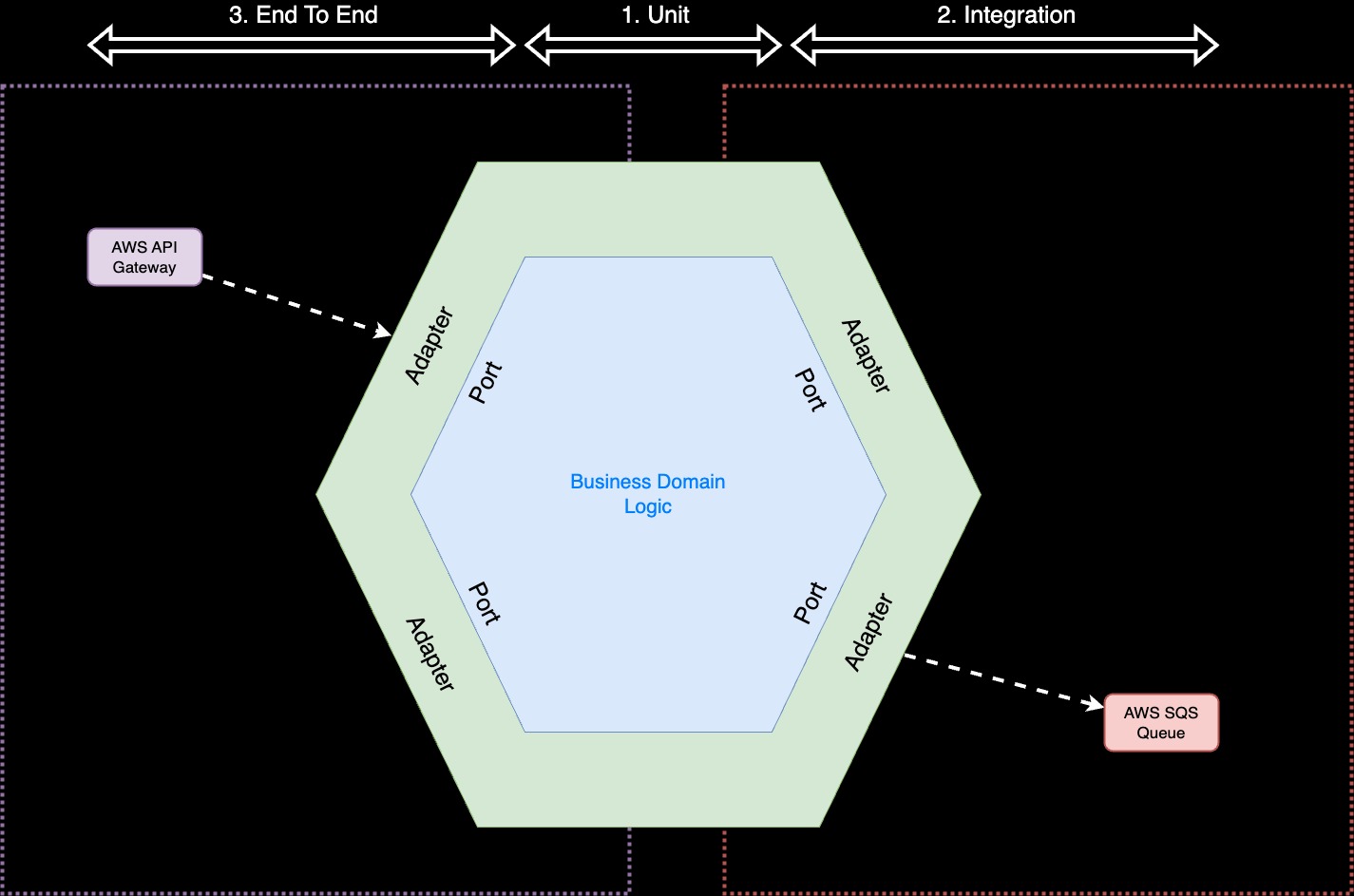

Combining all these elements can simplify the testing of business domain logic and remove the need to create mocks for AWS SDK calls. Testing is split into three main areas: unit, integration and end-to-end testing. Unit testing covers the central business domain logic, integration testing covers the driven-port side of the hexagonal architecture, and end-to-end tests start on the driving side and continue across the entire architecture.

Your business domain or service logic is covered by unit tests. With hexagonal architecture, all AWS SDK calls are isolated in the adapters behind the ports, so there are no AWS SDK calls within your business domain code and no need to introduce AWS SDK mocking libraries. This simplifies your testing and gives your developers more time to focus on delivering value.

The interfaces and adapters are tested by automated integration and end-to-end tests that run when your CI/CD tooling deploys your code to the cloud. Because these tests run in the cloud against real services, there is no need to mock AWS SDK calls. Combining these activities also dispels the notion that you need to run the entire solution locally in a simulated cloud environment on your desktop just so you can set breakpoints in your IDE to catch errors or inspect the state of variables.

You can debug locally by running your unit tests in debug mode, which fully exercises your business logic; this is a workflow I encourage all serverless teams to adopt. All you need to do is craft one or more test fixtures for specific test cases and add them as unit tests alongside your existing ones. You can then run the tests from your IDE in debug mode and set breakpoints exactly where you want to step through. This not only enables local testing with no AWS SDK mocking and no cloud simulation, but also grows your test suite with cases that reflect real scenarios rather than cases that exist only because we need test coverage. With SOLID Hexagonal architecture in place, any read from a database happens behind a port and adapter, so you can create a fake adapter that returns exactly the data your test needs to produce the expected outcome.
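As an illustration of the fake-adapter idea, the sketch below uses a hypothetical CustomerReaderPort standing in for a database read, a hypothetical CustomerService that depends only on that port, and an in-memory FakeCustomerReader that returns fixture data. None of these names come from the sample project. Running either test function under your IDE's debugger lets you step through the business logic with no cloud access at all.

```python
from abc import ABC, abstractmethod
from typing import Dict, Optional


class CustomerReaderPort(ABC):
    """Hypothetical driven port: read a customer record from a database."""

    @abstractmethod
    def get_customer(self, customer_id: str) -> Optional[dict]:
        """Return the customer record, or None if it does not exist."""


class CustomerService:
    """Hypothetical business logic that depends only on the port, never on boto3."""

    def __init__(self, reader: CustomerReaderPort) -> None:
        self._reader = reader

    def greeting_for(self, customer_id: str) -> str:
        customer = self._reader.get_customer(customer_id)
        if customer is None:
            return "Hello, guest"
        return f"Hello, {customer['name']}"


class FakeCustomerReader(CustomerReaderPort):
    """In-memory fake adapter returning exactly the data a test case needs."""

    def __init__(self, records: Dict[str, dict]) -> None:
        self._records = records

    def get_customer(self, customer_id: str) -> Optional[dict]:
        return self._records.get(customer_id)


def test_known_customer_is_greeted_by_name() -> None:
    # Set a breakpoint on the next line and run this test in your IDE's debugger.
    service = CustomerService(FakeCustomerReader({"c-1": {"name": "Ada"}}))
    assert service.greeting_for("c-1") == "Hello, Ada"


def test_unknown_customer_falls_back_to_guest() -> None:
    service = CustomerService(FakeCustomerReader({}))
    assert service.greeting_for("c-404") == "Hello, guest"
```

Each new scenario you want to debug becomes another small test with its own fixture data, which is how the test suite grows with realistic cases.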

A Reminder: The Core Principle of Lambda

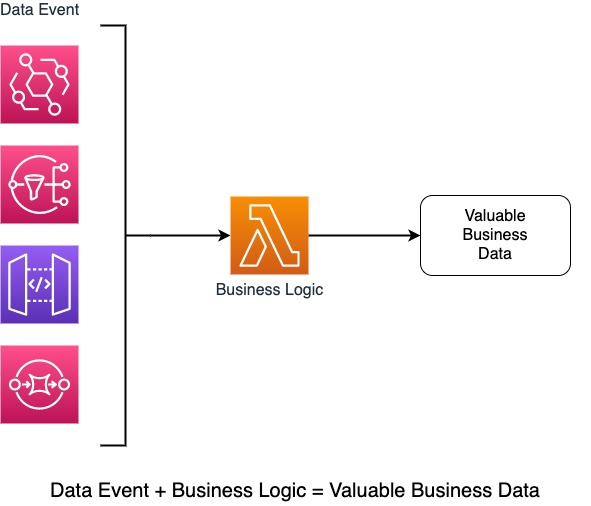

As we gain more confidence in designing and building serverless systems with more mature managed services in the cloud, we are starting to create complex, distributed systems that scale and run fast and efficiently. On the way to this point, while learning how each of the AWS primitive services works, we have forgotten the principle of how Lambda functions are intended to work.

In a nutshell, Lambda functions exist to add value to data events entering our system and then forward the result to the next component in the solution chain. This next component could be anything and may involve other managed services such as AWS SQS, SNS, EventBridge or API gateway. At the end of the day, to unit test our business logic, we need to pass a test data event and check the data forwarded to the next component in our solution chain after our business domain code has run. Doing this does not involve mocking AWS SDK calls, nor does it require us to run a mock SQS service locally so we can test end to end.
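The principle above reduces unit testing to "pass an event in, check what is forwarded". The following sketch applies it to the second Lambda function in the architecture discussed in the next section (SQS message in, DynamoDB item out). The ItemStoragePort, process_queue_event and RecordingStorage names, and the deliberately trivial transformation, are assumptions for illustration; only the SQS event shape (a Records list whose entries carry the message in body) reflects the real Lambda event format.

```python
import json
from abc import ABC, abstractmethod
from typing import List


class ItemStoragePort(ABC):
    """Hypothetical driven port for the next component, e.g. a DynamoDB table adapter."""

    @abstractmethod
    def put_item(self, item: dict) -> None:
        """Persist one item."""


def process_queue_event(event: dict, storage: ItemStoragePort) -> int:
    """Add value to each SQS message and forward it; returns how many items were stored."""
    stored = 0
    for record in event.get("Records", []):
        message = json.loads(record["body"])
        # Deliberately trivial "value add": normalise the name before storing.
        storage.put_item({"pk": message["id"], "name": message["name"].strip().title()})
        stored += 1
    return stored


class RecordingStorage(ItemStoragePort):
    """Fake port that just remembers what would have been forwarded."""

    def __init__(self) -> None:
        self.items: List[dict] = []

    def put_item(self, item: dict) -> None:
        self.items.append(item)
```

A unit test then builds an SQS-shaped test event, calls process_queue_event with a RecordingStorage, and asserts on storage.items; no SQS or DynamoDB emulator is involved.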

Testing Serverless Architectures

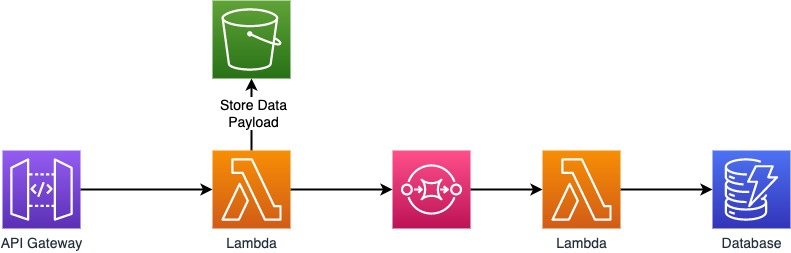

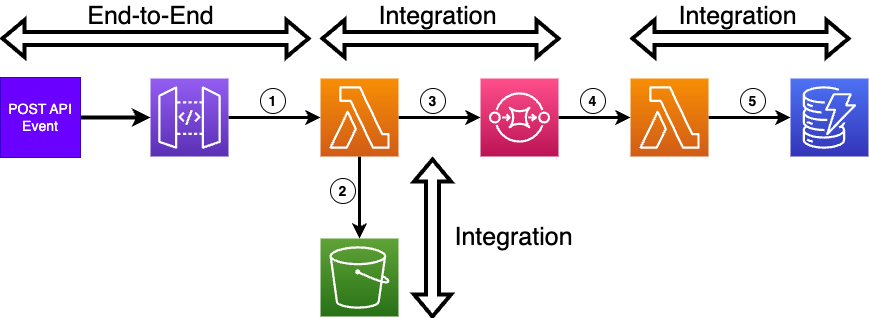

Let's look at an API Gateway interface that stores events in an S3 bucket and then pushes a transformed event into an SQS queue. Another Lambda function processes the data pushed to the SQS queue and inserts it into a DynamoDB table. This is a classic serverless pattern, widely used as a store-and-forward mechanism.

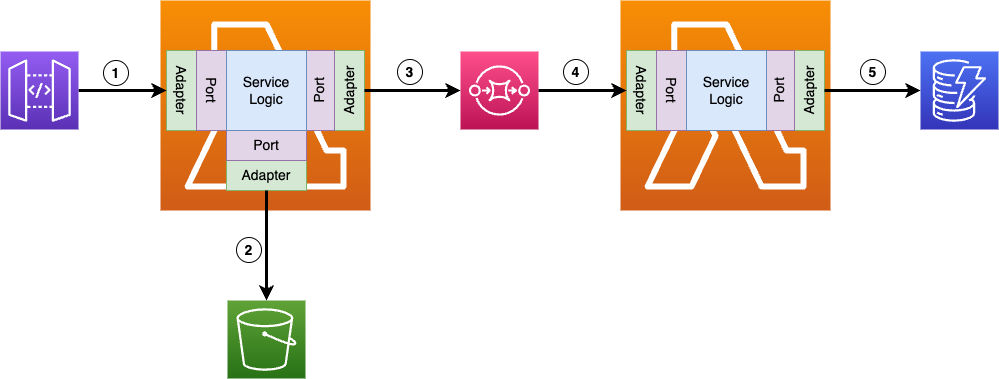

Taking the above architecture, we can isolate and identify the testing boundaries to simplify the creation of unit tests and eliminate mocking of AWS SDK calls altogether. Let's overlay the hexagonal architecture concepts of ports, adapters and business logic to show how our Service logic is encapsulated.

From the architecture overlay, everything within the Lambda symbol represents the unit test boundary; the outbound arrows from each Lambda box represent the integration test boundary (2, 3 & 5); and the entire numbered flow (1 - 5) represents the end-to-end test boundary. The overlay also emphasises the core principle of Lambda: Data Event in (1 & 4) + Lambda Processing Logic = Valuable Business Outcome (2, 3 & 5).

Unit Testing Lambda Code

The boundaries above also tell you where code should live. If we adhere to the hexagonal architecture principles outlined in my second article, our service logic is contained in a service class (or function) that is called by the code in the Lambda handler. The Lambda handler function is the adapter for the data event input at step 1; all it does is validate the input and map it to the ports (interfaces) of the service class.

The following code snippet is taken from the GitHub project and represents the first Lambda handler function, which is triggered by an API Gateway REST API event. The project uses AWS Lambda Powertools for Python to help handle the Lambda event (I added a few comments to the code and moved the handler for clarity).

The post_event function, which handles the API route for inbound data, maps the API body to the process_event method of the event_service class. This neatly isolates the software components that make up your Lambda handler and ensures each one is individually testable, which keeps unit tests small, concise and easy to understand. It also lets your service logic be reused in other AWS Serverless service contexts without changes, which means faster development and fewer unit test changes if your solution needs to pivot, as it often does when requirements and volumes change.
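The sketch below is not the project's handler (which uses Powertools routing); it is a minimal, hypothetical illustration of the same idea in plain Python. post_event validates the body of an API Gateway proxy event, maps it onto process_event, and shapes the HTTP response. The required name field, the status codes and the event_id response field are assumptions.

```python
import json
from typing import Protocol


class EventProcessor(Protocol):
    """Anything exposing process_event: the real EventService or a test fake."""

    def process_event(self, event_data: dict) -> str: ...


def _response(status_code: int, payload: dict) -> dict:
    # Shape expected by API Gateway's Lambda proxy integration.
    return {"statusCode": status_code, "body": json.dumps(payload)}


def post_event(api_event: dict, event_service: EventProcessor) -> dict:
    """Driving adapter: validate the body, map it onto the service, shape the reply."""
    try:
        body = json.loads(api_event.get("body") or "")
    except json.JSONDecodeError:
        return _response(400, {"message": "request body must be JSON"})
    if not isinstance(body, dict) or "name" not in body:
        return _response(400, {"message": "'name' is required"})
    # No business logic here: hand the validated data straight to the service.
    event_id = event_service.process_event(body)
    return _response(201, {"event_id": event_id})
```

In a deployed function, a module-level lambda_handler(event, context) would build the service once, so it is reused across warm invocations, and delegate to post_event.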

Better software architecture simplifies your Lambda handler code, so don't put everything in your Lambda handler unless it is very simple. Isolate any specific business logic in a service class or function so that it is portable, easier to test and quicker to understand.

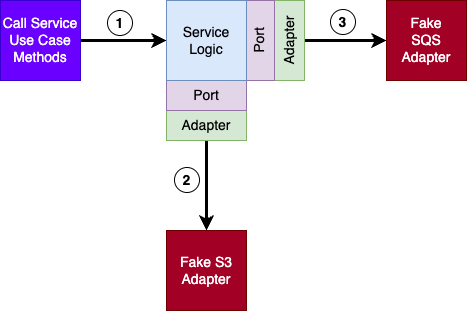

The unit tests for this API service focus on the EventService class, which is defined in the service.py file in the adapters folder and represents the central blue section of the hexagonal architecture diagram earlier. Unit tests are about testing our business domain service logic, not the Lambda handler function. The EventService is responsible for two actions: storing the event as an S3 object and pushing the event data into an SQS queue for processing by another Lambda function. Following Hexagonal architecture, the EventService uses two ports: FileStoragePort and MessagePort. To unit test the EventService we need to provide fake or mock implementations of these two ports. Mocks are still used, but they are purpose-built fakes that require no AWS knowledge and no external mocking libraries.

This diagram shows how our EventService logic calls the FileStoragePort (Fake S3 adapter) and MessagePort (Fake SQS adapter), which are both replaced by fake implementations to support unit testing. The complete unit test is in the test_event_service.py file in the sample project. The EventService constructor allows the adapter implementations to be overridden, which lets fakes be used for testing where needed. This is a classic Dependency Injection technique: a class's (or function's) dependencies are provided at creation so that the implementation can be swapped when desired.
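Here is a minimal sketch of that pattern, assuming the service stores the raw event through FileStoragePort and then forwards a transformed message through MessagePort. The port and class names follow the sample project; the method names, the events/<id>.json key layout and the message shape are hypothetical. Dependencies are passed to the constructor, so the test wires in in-memory fakes instead of S3 and SQS adapters.

```python
import json
import uuid
from abc import ABC, abstractmethod
from typing import Dict, List


class FileStoragePort(ABC):
    @abstractmethod
    def save_file(self, key: str, body: str) -> None: ...


class MessagePort(ABC):
    @abstractmethod
    def send_message(self, body: str) -> None: ...


class EventService:
    """Business logic: store the raw event, then forward a transformed message."""

    def __init__(self, file_storage: FileStoragePort, messages: MessagePort) -> None:
        # Injected dependencies: fakes in tests, real S3/SQS adapters in production.
        self._file_storage = file_storage
        self._messages = messages

    def process_event(self, event_data: dict) -> str:
        event_id = str(uuid.uuid4())
        self._file_storage.save_file(f"events/{event_id}.json", json.dumps(event_data))
        self._messages.send_message(json.dumps({"event_id": event_id, **event_data}))
        return event_id


class FakeFileStorage(FileStoragePort):
    def __init__(self) -> None:
        self.files: Dict[str, str] = {}

    def save_file(self, key: str, body: str) -> None:
        self.files[key] = body


class FakeMessages(MessagePort):
    def __init__(self) -> None:
        self.sent: List[str] = []

    def send_message(self, body: str) -> None:
        self.sent.append(body)


def test_process_event_stores_and_forwards() -> None:
    files, messages = FakeFileStorage(), FakeMessages()
    event_id = EventService(files, messages).process_event({"name": "demo"})

    assert json.loads(files.files[f"events/{event_id}.json"]) == {"name": "demo"}
    assert json.loads(messages.sent[0]) == {"event_id": event_id, "name": "demo"}
```

The sample project's constructor lets you override its default adapters; this sketch simply makes both parameters required to stay self-contained.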

Some of you will be asking: what about unit testing the Lambda handler function? In this project I have opted not to. It would add to unit test coverage, but the handler code is very simple and is exercised by the integration tests we go through next. I have strong opinions on test coverage and feel that many hours of developer time are wasted creating tests that deliver no meaningful quality improvement. If the code is critical to your business function, you should be writing unit tests. If the code adds no real business value and acts only as an enabler or bridge to your business function, then you need to decide as a development team whether testing it is useful and meaningful.

A Word on Code Coverage Metrics

Code coverage percentage metrics matter for some projects, but not all. In my experience, reaching a target coverage figure with automated testing adds very little to the overall quality of the software you deliver and does not bring a proportionate increase in confidence that the code is correct. I believe it wastes valuable developer time and reduces quality, because the team is no longer focused on delivering business value; it is focused on getting the coverage percentage to reach or exceed the target. In some cases, developers will write invalid tests just to hit the metric, or focus on the low-hanging fruit and miss tests for critical business functions. This is why I do not like mandatory code quality metric targets: they do not add value or increase quality, they just consume development time for no business value.

Automatic tests need to add to your overall product quality and be valuable.

I believe code coverage is vital when creating shared code libraries that are used by more than just your project, whether shared publicly or within your organisation. These libraries should strive for as much test coverage as possible, since the testing adds value and confidence for every user of the library.

Integration Testing Example

In the sample project on GitHub all of the integration tests can be found in the tests/integration folder with subfolders for each service to be tested. We will remain focused on the event_api service for integration testing.

For integration testing, we deploy actual AWS cloud service components and test them in the cloud to ensure they integrate correctly. Deployment is handled by the pytest conftest.py module, which deploys the components before the integration tests execute and removes the CloudFormation stack when test execution completes. The integration test code uses one or more test fixtures representing the real API Gateway event the Lambda code will receive when integrated with API Gateway. The test focuses on the outbound arrows in our architecture (2 & 3) and nothing else. The test infrastructure deploys the Lambda function, S3 bucket and SQS queue, and the test then invokes the Lambda function synchronously through the AWS Lambda Invoke API.
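One way to implement this deploy-then-tear-down behaviour is a context manager that a session-scoped pytest fixture in conftest.py can wrap. This is a sketch under assumptions, not necessarily how the sample project or Powertools does it: it shells out to the AWS CDK CLI (cdk deploy with --require-approval never, cdk destroy with --force), assumes cdk is on the PATH and is run from the CDK app directory, and uses a hypothetical stack name. The run parameter exists only so the commands can be checked without deploying anything.

```python
import subprocess
from contextlib import contextmanager
from typing import Callable, Iterator, List

# In tests/integration/conftest.py this might be wrapped as (stack name hypothetical):
#
#   @pytest.fixture(scope="session")
#   def event_api_stack():
#       with ephemeral_stack("EventApiIntegrationStack") as stack_name:
#           yield stack_name


def _cdk(args: List[str], run: Callable) -> None:
    """Run one AWS CDK CLI command; check=True makes a failed command raise."""
    run(["cdk", *args], check=True)


@contextmanager
def ephemeral_stack(stack_name: str, run: Callable = subprocess.run) -> Iterator[str]:
    """Deploy stack_name, hand control to the tests, then always destroy it."""
    try:
        _cdk(["deploy", stack_name, "--require-approval", "never"], run)
        yield stack_name
    finally:
        # Runs even if deployment or a test fails, so no stack is left behind.
        _cdk(["destroy", stack_name, "--force"], run)
```

Scoping the fixture to the session means the stack is deployed once for the whole integration run rather than once per test.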

The idea is to break your integration tests into small chunks that complete synchronously. This makes tests for asynchronously triggered Lambda functions (for example, those triggered by SQS) much simpler to write, because we no longer need to poll for results; that is why the integration tests are set up this way. The following example shows the complete integration tests for the Event API component triggered by API Gateway. With our Lambda function and resources deployed to the cloud, we are testing the AWS SDK API calls within our adapters, and if these tests pass we can be confident they will continue working as we expect.

Testing AWS SDK API calls in the cloud means you are testing all the cloud integration including configurations such as IAM Roles and Policies along with your actual Adapter code.

Lines 17 - 30 define test fixtures that retrieve the actual cloud resource details needed for the AWS SDK calls we make to interact with these services. When the test calls the AWS Lambda Invoke API with the API Gateway test fixture (line 39), the call is synchronous, so when we receive the response we can be sure all integrated actions have completed. We can therefore immediately check the S3 bucket for the desired outcome (lines 53 - 56) and check that the message pushed to the SQS queue is correctly formatted for the next service to consume (lines 58 - 67). This makes tests simpler and easier for developers of all levels to understand and reason about. Running tests this way lets your team move faster and write smaller, more concise integration tests.
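The two helpers below sketch what such fixtures and the synchronous invocation might look like; they are not the project's test code. get_stack_outputs reads the CloudFormation stack outputs (via describe_stacks) into a dictionary, and invoke_sync calls the Lambda Invoke API with InvocationType="RequestResponse", which returns only after the function has finished. Both take boto3 clients as parameters; the output keys and fixture names in the commented example test are hypothetical.

```python
import json
from typing import Any, Dict


def get_stack_outputs(cfn_client: Any, stack_name: str) -> Dict[str, str]:
    """Return the stack's outputs as {OutputKey: OutputValue}.

    cfn_client is assumed to be boto3.client("cloudformation").
    """
    stack = cfn_client.describe_stacks(StackName=stack_name)["Stacks"][0]
    return {o["OutputKey"]: o["OutputValue"] for o in stack.get("Outputs", [])}


def invoke_sync(lambda_client: Any, function_name: str, event: dict) -> dict:
    """Invoke a Lambda function synchronously and return its decoded JSON result.

    lambda_client is assumed to be boto3.client("lambda"). With RequestResponse the
    call returns only once the function has finished, so its S3 and SQS side
    effects can be checked immediately afterwards, with no polling.
    """
    response = lambda_client.invoke(
        FunctionName=function_name,
        InvocationType="RequestResponse",
        Payload=json.dumps(event).encode("utf-8"),
    )
    payload = response["Payload"].read()
    if "FunctionError" in response:
        # Surface the function's error payload in the test failure message.
        raise AssertionError(f"{function_name} failed: {payload!r}")
    return json.loads(payload)


# Example test (output keys and fixture names are hypothetical):
#
# def test_post_event(api_gateway_event, outputs, lambda_client, s3_client):
#     result = invoke_sync(lambda_client, outputs["EventApiFunctionName"], api_gateway_event)
#     assert result["statusCode"] == 201
#     listing = s3_client.list_objects_v2(Bucket=outputs["EventBucketName"])
#     assert listing["KeyCount"] >= 1
```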

End-to-End Testing Example

Testing Serverless architectures end to end is probably the most difficult part and has the most obstacles, especially when one or more asynchronous services make up your solution. In the sample project, I have tried to keep all testing straightforward by using synchronous mechanisms that minimise testing complexity and developer cognitive load. Because the integration tests have already exercised the Event API Lambda code with a real API Gateway event and confirmed that it interacts correctly with downstream services, the end-to-end test shouldn't need to test these components again.

Designing tests to execute synchronously lets your developers move faster, keeps your testing simpler and easier to understand, and reduces false positive results caused by asynchronous polling.

The end-to-end component is therefore all about deploying API Gateway and the API Lambda code along with the dependent downstream services. This lets us limit testing to the API boundary and confirm that the API Gateway integration works correctly by returning the response we expect according to the API contract. You could equally call this an API contract test, and that is really what the end-to-end component in this example is about: does the system behave correctly for external users?

Applying end-to-end testing as shown in the architecture diagram above means we progressively test each of our components in the cloud synchronously. The final part is to test that API Gateway triggers the Lambda function and that we get the correct response for each test case. Our example API is fairly simple, so a single test illustrates the strategy.

The end-to-end test uses the same fixture interface to get the real endpoint address of the deployed API Gateway infrastructure.
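A contract-style end-to-end check needs little more than an HTTP client and the deployed API URL. This sketch uses the standard library's urllib.request to POST JSON and return the status code and parsed body, treating 4xx/5xx responses as results to assert on rather than exceptions. It assumes the API returns JSON bodies, including for errors; the /events path and payload in the commented test are hypothetical, and the URL would come from the stack outputs fixture.

```python
import json
import urllib.error
import urllib.request
from typing import Tuple


def post_json(url: str, payload: dict, timeout: float = 10.0) -> Tuple[int, dict]:
    """POST payload as JSON to a deployed API; return (status code, parsed JSON body)."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status, json.loads(response.read() or b"{}")
    except urllib.error.HTTPError as error:
        # 4xx/5xx responses are part of the API contract, so return them for assertions.
        return error.code, json.loads(error.read() or b"{}")


# Example contract test (path and payload are hypothetical):
#
# def test_post_event_contract(api_url):
#     status, body = post_json(f"{api_url.rstrip('/')}/events", {"name": "demo"})
#     assert status == 201
#     assert "event_id" in body
```

Because the call is synchronous and only the API boundary is checked, the test stays short and does not need to poll any downstream service.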

I have focused my testing examples on an API Gateway-bound service design, which is one of the more common serverless system implementations. It is by no means the only type, and covering every aspect of Serverless system testing would take far more than I have written here. I chose a specific example to help you understand how to automate testing of a Serverless architecture synchronously and without mocking AWS SDK calls. The idea is to apply testing that makes sense for your projects, enable your developers to move quickly and help new developers get going fast!

Through SOLID Hexagonal software architecture and synchronous in-cloud testing for integration and API contract tests, you can remove the need for AWS SDK mocking libraries and help new developers get started and understand your business code more quickly and easily.

GitHub Code Disclaimer

The examples presented are focused on the Python programming language and the code presented uses object-oriented programming. Python is not the only language for coding with AWS Lambda and the solution samples may not suit your preferred language.

The code presented is not considered production ready and should not be used in production without ensuring it meets your development standards and code quality requirements.

Further Reading

I strongly recommend reading Yan Cui's blogs and also checking out his new course on Serverless testing which is in development (links below).